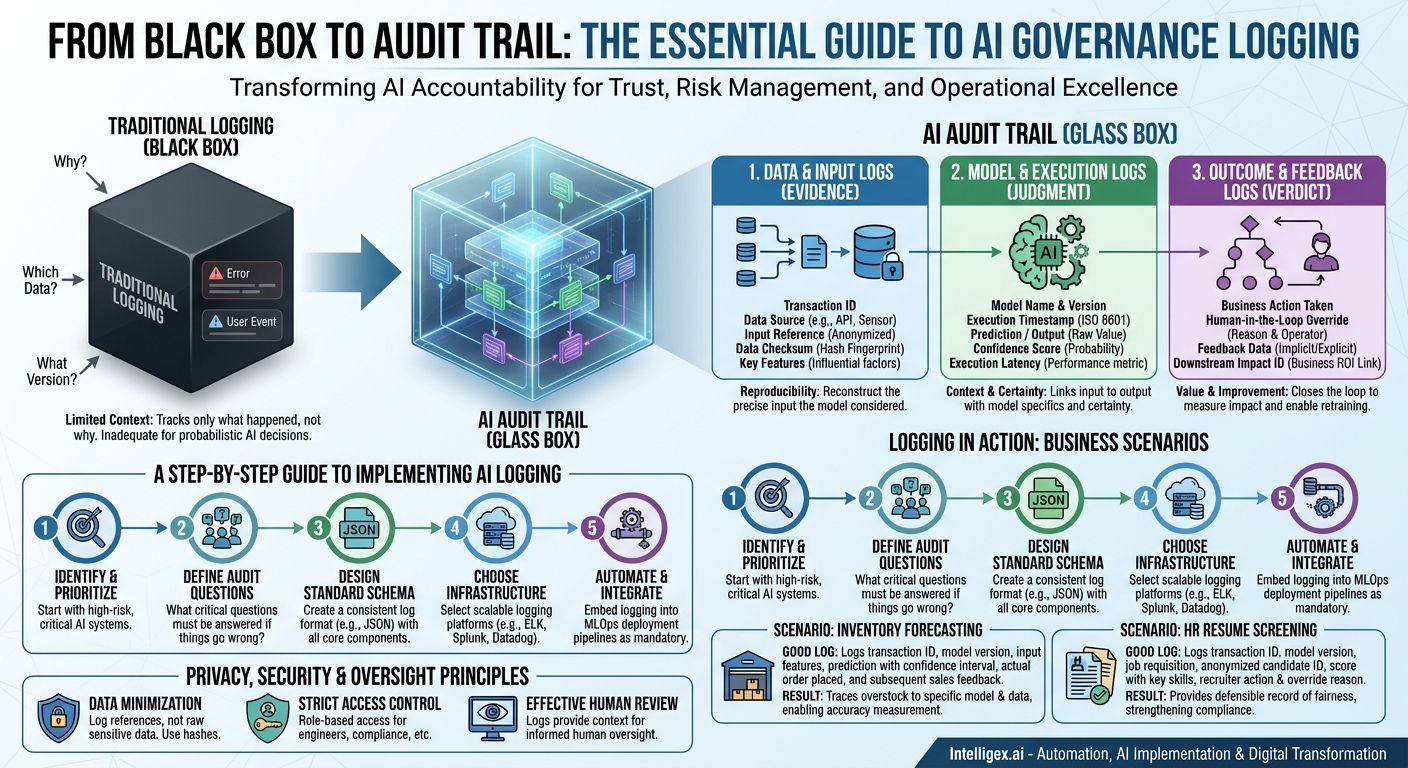

As artificial intelligence moves from experimental labs to the core of your business operations, the way we think about system accountability has to evolve. Standard application logs, which track errors and user events, are no longer sufficient. When an AI system makes a critical decision, from approving a financial transaction to prioritizing a supply chain shipment, you need to be able to answer one simple question with absolute certainty: Why?

AI governance logging is the practice of systematically recording the critical details of an AI’s decision-making process. This isn’t about creating bureaucratic hurdles. It’s about building a foundation of trust, managing risk, and enabling operational excellence. A robust audit trail allows you to debug models faster, satisfy regulatory requirements with confidence, and prove the value of your AI investments. Without it, your AI is a black box, posing a significant risk to your operations and reputation.

Why Traditional Logging Isn’t Enough for AI

Traditional software logs are event-driven. They tell you what happened: a user authenticated, a database write failed, an API call was completed. They are excellent for understanding procedural execution flows. However, they lack the context needed to understand a probabilistic decision made by an AI model.

AI systems are different because their behavior is learned from data, not explicitly programmed. The same input might produce a slightly different output if the model is retrained or updated. An audit requires insight into the model’s “state of mind” at the moment of decision. Simply logging “AI recommended option A” is useless. You need to know which version of the AI, using what specific data, came to that conclusion and with what level of certainty.

This gap creates significant business challenges:

- Lack of Visibility: When a customer asks why they were shown a specific ad or given a certain credit limit, “the algorithm did it” is not an acceptable answer. This damages customer trust and can lead to regulatory scrutiny.

- Slower Scalability: You cannot confidently scale an AI system across the enterprise if you cannot explain its decisions. Each new deployment becomes a new source of unmanaged risk, slowing down your digital transformation efforts.

- Increased Costs: Without detailed logs, debugging a faulty AI model is a nightmare. Data scientists and engineers waste valuable time trying to reproduce an issue instead of analyzing a clear record of the problematic transaction.

The Core Components of an AI Audit Log

To build a comprehensive audit trail, your logs must capture the full lifecycle of an AI-driven decision. Think of it as documenting the evidence, the judgment, and the verdict. We can break this down into three fundamental pillars.

1. Data and Input Logs

This is the “evidence” portion of your log. It details exactly what information the model considered before making its decision. The goal here is reproducibility. An auditor or data scientist should be able to look at this log and reconstruct the precise input that the model received.

What to capture:

- Transaction ID: A unique identifier that links all related logs (input, execution, outcome) for a single decision.

- Data Source: Where did the input data come from? (e.g., `crm-api`, `user-form-submission`, `daily-iot-feed`).

- Input Data Reference: A pointer to the input data, not the raw data itself, especially if it contains Personally Identifiable Information (PII). This could be a customer ID, an invoice number, or a support ticket ID.

- Data Checksum/Hash: A cryptographic hash (like SHA-256) of the input data. This provides a tamper-evident fingerprint: if the stored data is later re-hashed and the digest no longer matches, you know the data has been altered since it was processed.

- Key Features: Log the most influential features used by the model, but avoid logging the entire data payload. For a loan application model, you might log `credit_score_range: '700-750'` and `debt_to_income_ratio: 0.35` but not the applicant’s name or address.
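As a rough illustration of the input log described above, here is a minimal Python sketch. The function names (`canonical_hash`, `build_input_log`), the field names, and the sample application are all hypothetical, chosen only for this example. It assumes the input payload is JSON-serializable and hashes a canonical JSON encoding, so the same data always yields the same digest regardless of key order.

```python
import hashlib
import json
import uuid


def canonical_hash(payload: dict) -> str:
    """Return a SHA-256 hex digest of a canonical JSON encoding of the payload."""
    # sort_keys and fixed separators make the encoding independent of key order.
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def build_input_log(payload: dict, source: str, reference: str,
                    logged_features: list[str]) -> dict:
    """Build the input portion of an audit record without copying the raw payload."""
    return {
        "transaction_id": str(uuid.uuid4()),
        "data_source": source,
        "input_data_ref": reference,
        "input_sha256": canonical_hash(payload),
        # Only the allowlisted features are copied into the log.
        "key_features": {
            name: payload[name] for name in logged_features if name in payload
        },
    }


# Hypothetical loan application; the applicant's name never reaches the log.
application = {
    "applicant_name": "Jane Example",
    "credit_score_range": "700-750",
    "debt_to_income_ratio": 0.35,
}
record = build_input_log(
    application,
    source="user-form-submission",
    reference="application-000123",
    logged_features=["credit_score_range", "debt_to_income_ratio"],
)
print(json.dumps(record, indent=2))
```

Note that the hash is only useful for verification if the original input is retained somewhere it can be re-hashed later, and that the allowlist of logged features is a design choice you would agree on with your compliance team.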

2. Model and Execution Logs

This is the “judgment” record. It captures the specifics of the AI model that performed the analysis and what it concluded. This is arguably the most critical part of an AI audit log, as it provides the direct link between an input and an output.

What to capture:

- Model Name and Version: This is non-negotiable. You must log the exact model and version that made the prediction (e.g., `lead-scorer-v2.1.4-prod`). Models are constantly retrained, and their behavior changes.

- Execution Timestamp: An ISO 8601 formatted timestamp of when the prediction was made.

- Prediction or Output: The raw output from the model. This could be a class label (“Fraudulent”), a score (98.5), a forecast ($50,000), or a snippet of generated text.

- Confidence Score: For classification models, this is the probability the model assigned to its chosen prediction. A decision made with 99% confidence is very different from one made with 51% confidence. This context is vital for risk assessment.

- Execution Latency: How long did the model take to produce the output? This is a key operational metric for monitoring performance and cost.
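One way to capture these execution fields is to wrap the prediction call itself. The sketch below is illustrative only: `predict_with_audit`, `toy_fraud_model`, and the version string are hypothetical, and it assumes a classifier exposed as a function that returns one probability per label.

```python
import time
from datetime import datetime, timezone
from typing import Callable, Sequence


def predict_with_audit(
    predict_proba: Callable[[dict], Sequence[float]],
    labels: Sequence[str],
    features: dict,
    model_version: str,
    transaction_id: str,
) -> dict:
    """Run one prediction and return the execution portion of an audit record."""
    started = time.perf_counter()
    probabilities = predict_proba(features)
    latency_ms = (time.perf_counter() - started) * 1000.0

    # Index of the label with the highest probability.
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    return {
        "transaction_id": transaction_id,
        "model_version": model_version,
        # Timezone-aware UTC timestamp in ISO 8601 format.
        "executed_at": datetime.now(timezone.utc).isoformat(),
        "prediction": labels[best],
        "confidence": round(float(probabilities[best]), 4),
        "latency_ms": round(latency_ms, 3),
    }


def toy_fraud_model(features: dict) -> list[float]:
    """Stand-in for a real model: returns [P(legitimate), P(fraudulent)]."""
    p_fraud = 0.9 if features["amount"] > 10_000 else 0.1
    return [1.0 - p_fraud, p_fraud]


entry = predict_with_audit(
    toy_fraud_model,
    labels=["Legitimate", "Fraudulent"],
    features={"amount": 25_000},
    model_version="fraud-detector-v0.0.1-example",
    transaction_id="abc-123",
)
print(entry)
```

Wrapping the call means the version, timestamp, and latency are recorded by the same code path that produced the prediction, which makes it harder for the log and the decision to drift apart.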

3. Outcome and Feedback Logs

This is the “verdict.” It closes the loop by recording what happened as a result of the AI’s recommendation. This log is essential for measuring business value, monitoring for performance degradation, and gathering data for future model retraining.

What to capture:

- Business Action Taken: The final action implemented by the system or human operator (e.g., `invoice_approved`, `user_escalated_to_support`, `discount_applied`).

- Human-in-the-Loop Override: If a person reviewed the AI’s suggestion, log their decision. Did they accept or reject it? (e.g., `override: true`, `reason: 'incorrect customer context'`, `operator_id: 'j.doe'`).

- Feedback Data: Capture direct or indirect feedback. This could be a customer clicking on a recommended product (implicit feedback) or a user rating a summary as “helpful” (explicit feedback).

- Downstream Impact ID: When possible, link the AI decision to a downstream business event. For example, link a sales lead score to the eventual Salesforce Opportunity ID that was created. This directly connects AI activity to business ROI.
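The outcome record can be sketched as a small data structure that is joined to the execution log through the shared transaction ID. Everything here (`OutcomeLog`, `join_decision`, the field names, and the sample values) is a hypothetical illustration; the rule that an override must carry a reason and an operator is an assumption about what an auditor would want, not a requirement stated above.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class OutcomeLog:
    transaction_id: str
    business_action: str
    override: bool = False
    override_reason: Optional[str] = None
    operator_id: Optional[str] = None
    downstream_impact_id: Optional[str] = None

    def __post_init__(self) -> None:
        # Assumed policy: a human override is only auditable if we know who and why.
        if self.override and not (self.override_reason and self.operator_id):
            raise ValueError("an override must record a reason and an operator_id")


def join_decision(execution: dict, outcome: OutcomeLog) -> dict:
    """Attach an outcome to its execution record via the shared transaction_id."""
    if execution["transaction_id"] != outcome.transaction_id:
        raise ValueError("transaction_id mismatch between execution and outcome")
    return {**execution, "outcome": asdict(outcome)}


execution = {
    "transaction_id": "abc-123",
    "model_version": "invoice-approver-v0-example",
    "prediction": "approve",
    "confidence": 0.62,
}
outcome = OutcomeLog(
    transaction_id="abc-123",
    business_action="user_escalated_to_support",
    override=True,
    override_reason="incorrect customer context",
    operator_id="j.doe",
)
decision = join_decision(execution, outcome)
print(decision["outcome"]["business_action"])
```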

A Step-by-Step Guide to Implementing AI Logging

Establishing a robust logging framework does not have to be an overwhelming, multi-year project. By taking a methodical approach, you can build a scalable and effective system that delivers immediate value.

- Identify and Prioritize Your AI Systems. Not all AI models carry the same level of risk. A model that recommends articles for an internal knowledge base has a much lower risk profile than one involved in medical diagnostics or financial underwriting. Categorize your models (e.g., high, medium, low risk) and start with your most critical, high-risk system first.

- Define Your Audit Questions. Before writing a single line of code, assemble a team including business owners, legal counsel, and engineers. Ask them: “If something goes wrong with this AI, what are the top five questions you will need answered immediately?” Common questions include: “Why was this specific customer denied?” or “Which model version was active during last week’s outage?” Use the answers to define your required log fields.

- Design a Standardized Log Schema. Consistency is key. Create a standardized, structured logging format (like JSON) that all data science and engineering teams must use. This prevents fragmented, unusable logs. Your schema should include the core components discussed above: a unique transaction ID, input references, model version, output, confidence score, and outcome.

- Choose the Right Logging Infrastructure. Your logs need a home. For low-volume systems, structured text files sent to a service like Amazon CloudWatch or Google Cloud Logging might suffice. For high-volume, critical applications, a centralized logging platform like Datadog, Splunk, or an ELK (Elasticsearch, Logstash, Kibana) stack is more appropriate. These tools provide powerful search, visualization, and alerting capabilities crucial for effective auditing. Consider the cost, scalability, and data residency requirements when making your choice.

- Automate and Integrate into Your MLOps Pipeline. Logging should be a mandatory, automated part of your model deployment process. Integrate logging libraries directly into your model serving templates and APIs. Make it impossible to deploy a model without proper logging enabled. This ensures that governance is built-in, not bolted on as an afterthought. You can explore tools within MLOps platforms like Amazon SageMaker or Azure Machine Learning to help automate this process.
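To make the schema and automation steps concrete, here is a minimal sketch using only Python's standard library. The required field list mirrors the core components named above; the logger name `ai_audit` and the helper names are hypothetical. For simplicity it treats the outcome as part of the same record. In practice the outcome often arrives later and would be written as a separate record linked by `transaction_id`.

```python
import json
import logging
import sys

# Core components every audit record must carry (assumed schema for this sketch).
REQUIRED_FIELDS = (
    "transaction_id",
    "input_data_ref",
    "model_version",
    "prediction",
    "confidence",
    "outcome",
)

audit_logger = logging.getLogger("ai_audit")
audit_logger.setLevel(logging.INFO)
_handler = logging.StreamHandler(sys.stdout)
_handler.setFormatter(logging.Formatter("%(message)s"))  # one JSON object per line
audit_logger.addHandler(_handler)
audit_logger.propagate = False


def missing_fields(record: dict) -> list[str]:
    """Return the required fields that are absent or None, in schema order."""
    return [name for name in REQUIRED_FIELDS if record.get(name) is None]


def emit_audit_record(record: dict) -> str:
    """Validate a record against the schema, then write it as a single JSON line."""
    missing = missing_fields(record)
    if missing:
        raise ValueError(f"audit record rejected, missing fields: {missing}")
    line = json.dumps(record, sort_keys=True)
    audit_logger.info(line)
    return line


line = emit_audit_record({
    "transaction_id": "abc-123",
    "input_data_ref": "sku-12345-daily-features-20231025.csv",
    "model_version": "inventory-forecast-v3.2",
    "prediction": {"units": 500},
    "confidence": 0.9,
    "outcome": {"action": "pending"},
})
```

A check like `missing_fields` could also run as a test in your deployment pipeline against a sample prediction, so that a model serving template that omits a required field fails before it reaches production.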

Logging in Action: Scenarios from the Business

Let’s move from theory to practice. Here is how robust AI logging provides tangible value across different business functions.

Scenario: Supply Chain Inventory Forecasting

An AI model predicts daily inventory needs for a national retail chain to prevent stockouts.

- Bad Logging: `Timestamp: 2023-10-26, SKU: 12345, Prediction: 500 units`

- Good Logging:

  - `transaction_id: "abc-123"`

  - `model_version: "inventory-forecast-v3.2"`

  - `input_features_ref: "sku-12345-daily-features-20231025.csv"`

  - `prediction: {units: 500, confidence_interval: [450, 550]}`

  - `outcome: {order_placed: 500, by: 'automated_system'}`

  - `feedback: {actual_sales_24hr: 485, stockout_event: false}`

- Business Value: When a manager asks why a particular store was overstocked, the team can immediately trace the forecast back to the exact model version and input data used. The feedback log of actual sales is then used to automatically measure forecast accuracy, driving down holding costs and improving fulfillment quality.
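Measuring forecast accuracy from the feedback log can be as simple as the following sketch, which uses the numbers from the example record above. The function name `forecast_error` and the choice of metrics (absolute error, absolute percentage error, and whether actual sales fell inside the logged interval) are assumptions made for illustration.

```python
def forecast_error(record: dict) -> dict:
    """Compare a logged forecast with the actual sales recorded in its feedback."""
    predicted = record["prediction"]["units"]
    low, high = record["prediction"]["confidence_interval"]
    actual = record["feedback"]["actual_sales_24hr"]

    absolute_error = abs(predicted - actual)
    # Percentage error is undefined when nothing was sold.
    ape = absolute_error / actual * 100 if actual else None
    return {
        "transaction_id": record["transaction_id"],
        "absolute_error": absolute_error,
        "absolute_percentage_error": ape,
        "within_interval": low <= actual <= high,
    }


logged = {
    "transaction_id": "abc-123",
    "model_version": "inventory-forecast-v3.2",
    "prediction": {"units": 500, "confidence_interval": [450, 550]},
    "feedback": {"actual_sales_24hr": 485, "stockout_event": False},
}
metrics = forecast_error(logged)
print(metrics["absolute_error"], metrics["within_interval"])
```

For this record the forecast missed by 15 units, roughly 3.1% of actual sales, and the actual value fell inside the logged interval. Aggregating these metrics per `model_version` is one way to spot a retrained model that performs worse than its predecessor.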

Scenario: HR Resume Screening

An AI tool helps recruiters by scoring and prioritizing incoming resumes for a software engineering role.

- Bad Logging: `CandidateID: 9876, Score: 85`

- Good Logging:

  - `transaction_id: "def-456"`

  - `model_version: "resume-screener-swe-v1.1"`

  - `job_requisition_id: "54321-SWE"`

  - `candidate_id_anonymized: "xyz-789"`

  - `prediction: {score: 88.2, key_skills_identified: ['python', 'aws', 'sql']}`

  - `outcome: {action: 'shortlisted_for_review', recruiter_id: 's.jones'}`

  - `feedback: {recruiter_decision: 'advanced_to_interview', reason_code: 'strong_project_experience'}`

- Business Value: This detailed log provides a defensible record of the hiring process. If questions arise about fairness or bias, HR can demonstrate that decisions were based on specific, job-relevant skills identified by a consistent model. This speeds up hiring while strengthening compliance and reducing legal risk.

A Note on Privacy, Security, and Human Oversight

Your logs should be comprehensive, but they must not become a security liability. Implementing AI governance requires a security-first mindset. Follow three simple principles.

1. Practice Data Minimization. Your logs should never contain raw sensitive data. Log a `customer_id`, not the customer’s name, address, and phone number. Log a `transaction_hash`, not the full credit card number. The goal is to record enough information to trace a decision back to the source data, without duplicating that sensitive data in your logging system.

2. Enforce Strict Access Control. Not everyone in your organization needs to see the detailed logs of your AI systems. Access to logging platforms should be role-based and tightly controlled. An engineer debugging performance issues needs different access than a compliance officer auditing for fairness. Ensure access is logged and monitored.

3. Enable Effective Human Review. Good logging is the bedrock of a successful “human-in-the-loop” process. When an AI flags a transaction for manual review, the human expert needs context to make an informed decision. The logs provide that context instantly: which model made the call, what data it saw, and how confident it was. This empowers your team to act as effective supervisors of the AI, building trust and ensuring accountability.
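As one possible way to apply data minimization in code, the sketch below filters a record through an allowlist and replaces the customer identifier with a keyed hash before anything is logged. The names `ALLOWED_FIELDS`, `pseudonymize`, and `minimize` are hypothetical, and the secret key shown is a placeholder: this assumes the real key lives in a secrets manager, outside the logging system.

```python
import hashlib
import hmac

# Fields that are allowed to reach the log at all (assumed allowlist).
ALLOWED_FIELDS = {"customer_id", "credit_score_range", "debt_to_income_ratio"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Return a keyed SHA-256 digest so the raw identifier never appears in logs."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Drop every field not on the allowlist and pseudonymize the customer ID."""
    safe = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    if "customer_id" in safe:
        safe["customer_id"] = pseudonymize(str(safe["customer_id"]), secret_key)
    return safe


# Placeholder key for illustration only; never hard-code a real key.
EXAMPLE_KEY = b"replace-with-a-key-from-your-secrets-manager"

raw = {
    "customer_id": "C-1001",
    "name": "Jane Example",
    "phone": "555-0100",
    "credit_score_range": "700-750",
}
safe_record = minimize(raw, EXAMPLE_KEY)
print(sorted(safe_record))
```

An allowlist fails closed: a new sensitive field added upstream stays out of the logs until someone deliberately permits it. Anyone holding the key can still map a known customer ID to its digest, so access to the key needs the same role-based control as the logs themselves.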

For organizations seeking a structured approach to managing AI risks, frameworks like the NIST AI Risk Management Framework provide excellent, vendor-neutral guidance that aligns with these principles.

Your Next Steps to a Defensible AI

Building a complete AI audit trail is a journey, not a destination. The key is to start now with a focused, value-driven approach. Don’t aim for perfection on day one. Aim for progress.

- Select a single, high-impact AI system. Choose one model that is critical to your business but manageable in scope. This could be a lead scoring model in your sales department or a fraud detection system in finance.

- Define the essential audit questions for that system. Work with the business owner, IT, and a compliance representative to identify the absolute must-have information needed to explain a decision.

- Implement the core logging components. Ensure you are capturing, at a minimum, the model version, a reference to the input data, the model’s output including a confidence score, and the final business outcome.

By taking these concrete steps, you transform AI from a mysterious black box into a transparent, auditable, and ultimately more valuable business asset. You build the operational muscle needed to scale AI confidently, manage risk proactively, and accelerate your digital transformation journey.
