Every customer support team wants to deliver a great experience, every single time. But “great” can be subjective. What one agent considers a resolved ticket, a customer might see as a temporary fix. What one team member views as a friendly tone, another might perceive as unprofessional. This inconsistency is the silent killer of customer satisfaction. Without a system to define, measure, and improve the quality of your support interactions, you’re essentially flying blind, hoping for the best but unable to guarantee it. This is where a Quality Assurance (QA) review process comes in—not as a complex, bureaucratic nightmare, but as a simple, powerful tool for coaching, consistency, and continuous improvement.

Many teams shy away from implementing a QA process because they imagine it requires expensive software, a dedicated team of analysts, and a mountain of spreadsheets. The truth is, you can start with a straightforward, lightweight system that delivers immense value. This guide is designed to give you exactly that: a simple, actionable framework for building a support QA review process from the ground up, focusing on what truly matters: helping your team and your customers succeed.

What is a Support QA Review, Really? (And Why You Can’t Afford to Skip It)

At its core, a support QA review process is a system for methodically reviewing a sample of customer interactions (like emails, chats, or phone calls) against a predefined set of standards. It’s not about catching agents making mistakes or punishing them for low scores. Instead, think of it as a coaching and development tool. It’s the equivalent of a sports team reviewing game tapes to see what went right, what went wrong, and how they can play better together in the next match.

A well-implemented QA process moves your team from anecdotal feedback (“I feel like we’re doing a good job”) to data-driven insights (“We’ve improved our first-contact resolution by 15% this quarter by focusing on more thorough discovery questions”). The benefits are profound and touch every aspect of your support operation:

- Drives Consistency: It ensures that regardless of which agent a customer interacts with, they receive a consistent level of service that aligns with your brand’s voice and standards. This builds trust and predictability.

- Powers Agent Development: QA provides specific, actionable feedback that helps agents understand their strengths and identify areas for growth. It’s the most effective way to provide personalized coaching that actually sticks.

- Uncovers Hidden Insights: Are customers constantly confused about a specific feature? Is a help center article misleading? QA reviews are a goldmine for identifying recurring product issues, gaps in your knowledge base, and broken internal processes that cause friction for both customers and agents.

- Boosts Customer Satisfaction (CSAT): The quality of support interactions and customer happiness tend to move together. By systematically improving interaction quality, you can expect a positive effect on your CSAT, NPS, and other customer-centric metrics.

- Justifies Team Needs: When you can present data showing that 30% of support tickets are related to a single, confusing part of your product, you have a much stronger case for requesting resources from the product or engineering teams to fix it. QA data is evidence.

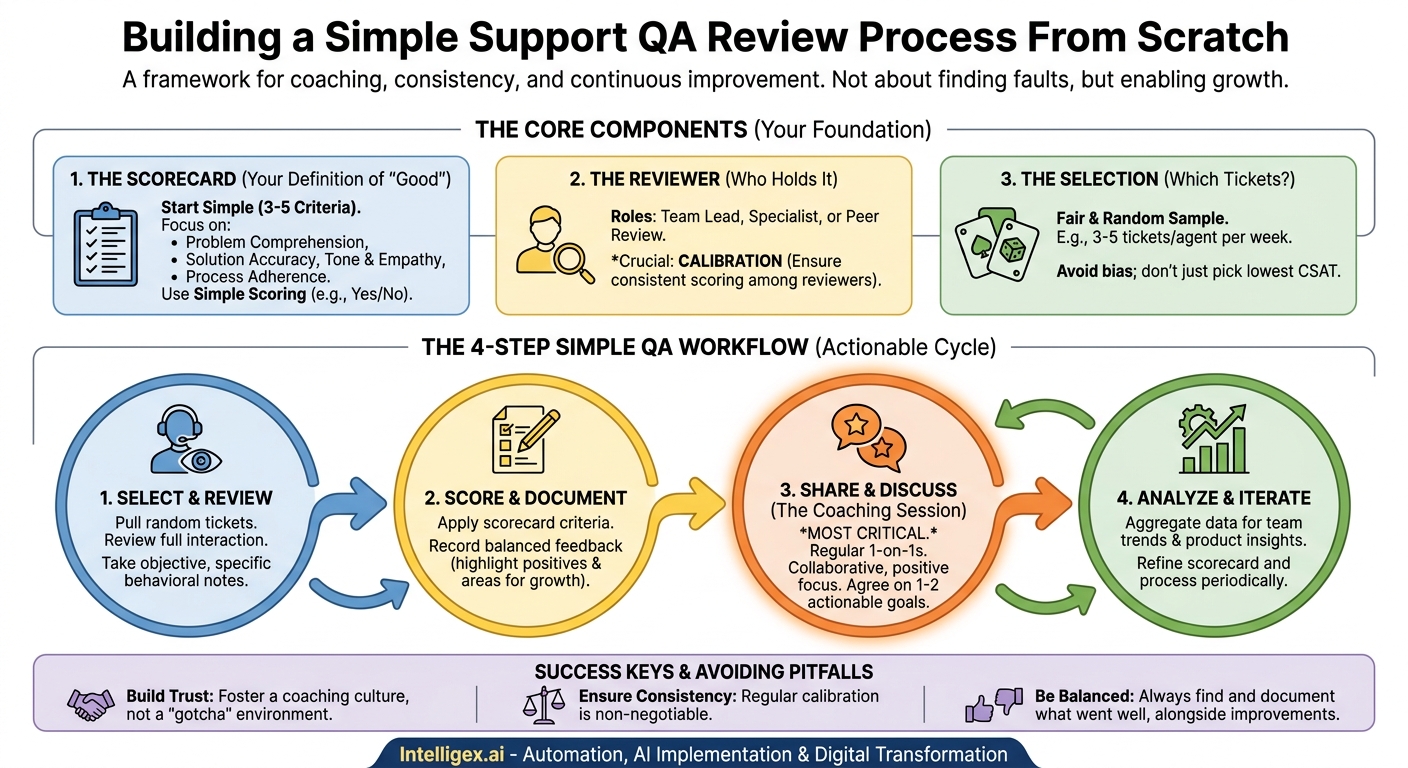

The Core Components of a Simple QA Process

To avoid getting bogged down in complexity, let’s focus on the three essential pillars of any effective QA program. Get these right, and you’ll have a solid foundation to build upon.

1. The Scorecard: Your Definition of “Good”

The scorecard, or rubric, is the heart of your QA process. It’s a simple checklist of criteria that you use to evaluate each interaction. The golden rule here is to start simple. You can always add more complexity later, but beginning with a massive, 50-point checklist is a recipe for failure. A good starting scorecard might have just 3-5 core categories.

Key Categories for a Starter Scorecard:

- Problem Comprehension: Did the agent accurately understand the customer’s core problem? Did they ask clarifying questions if needed, or did they jump to a solution based on a keyword?

- Solution Accuracy & Completeness: Was the information provided correct? Did it fully resolve the customer’s issue, or did it only address part of it, leading to a follow-up ticket?

- Tone & Empathy: Was the agent’s communication professional, polite, and empathetic? Did they acknowledge the customer’s frustration (if any) and align themselves with the customer’s goal?

- Process Adherence: Did the agent follow critical internal processes? For example, did they correctly tag the ticket for reporting, use the right macro, or document the issue in the CRM? This category helps keep your internal data clean.

For scoring, skip complex weighting and percentages at first. Start with a simple binary (Yes/No) or a three-point scale (e.g., Needs Improvement, Meets Expectations, Exceeds Expectations) for each category. The goal isn’t a granular score; it’s a clear signal for a coaching conversation.
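To make the starter scorecard concrete, here is a minimal sketch in Python of one way to record a review on the three-point scale. The `Rating`, `Review`, and `coaching_topics` names, and the sample ticket and agent, are illustrative assumptions and not part of any particular QA tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Rating(Enum):
    NEEDS_IMPROVEMENT = "Needs Improvement"
    MEETS = "Meets Expectations"
    EXCEEDS = "Exceeds Expectations"


# The four starter categories from this guide.
CATEGORIES = (
    "Problem Comprehension",
    "Solution Accuracy & Completeness",
    "Tone & Empathy",
    "Process Adherence",
)


@dataclass
class Review:
    ticket_id: str
    agent: str
    ratings: dict[str, Rating]
    notes: dict[str, str] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Require a rating for every category so reviews stay comparable.
        missing = [c for c in CATEGORIES if c not in self.ratings]
        if missing:
            raise ValueError(f"Unrated categories: {missing}")

    def coaching_topics(self) -> list[str]:
        """Categories rated Needs Improvement, in scorecard order."""
        return [
            c for c in CATEGORIES
            if self.ratings[c] is Rating.NEEDS_IMPROVEMENT
        ]


review = Review(
    ticket_id="T-1001",  # hypothetical ticket ID
    agent="sam",         # hypothetical agent name
    ratings={
        "Problem Comprehension": Rating.MEETS,
        "Solution Accuracy & Completeness": Rating.NEEDS_IMPROVEMENT,
        "Tone & Empathy": Rating.EXCEEDS,
        "Process Adherence": Rating.MEETS,
    },
    notes={"Tone & Empathy": "Acknowledged the customer's frustration early."},
)
print(review.coaching_topics())
```

The sketch deliberately produces a list of coaching topics instead of a numeric score, in keeping with the idea that the scorecard is a signal for a conversation.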

2. The Reviewer: Who Holds the Scorecard?

You need someone to conduct the reviews consistently. In a small team, this responsibility usually falls to one of a few roles:

- Team Lead or Manager: This is the most common and often most effective starting point. The manager is already responsible for team performance and coaching, so QA fits naturally into their role.

- Dedicated QA Specialist: As the team grows, you might hire someone specifically for this role. They can bring a level of objectivity and focus that a busy manager might lack.

- Peer Reviews: In this model, agents review each other’s tickets. This can be fantastic for fostering a culture of shared ownership and learning. However, it requires significant training and careful management to avoid personal bias and ensure consistency. It’s often best introduced after a manager-led process is well-established.

The most important factor, regardless of who does the reviewing, is calibration. A calibration session is when all reviewers (or just the manager, if it’s one person) score the same ticket and then discuss their reasoning. This ensures everyone is interpreting the scorecard in the same way. Inconsistent scoring is one of the quickest ways to erode an agent’s trust in the QA process.
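A calibration session can be prepared with very little tooling. The following Python sketch assumes each reviewer has scored the same ticket with Yes/No per category, and lists only the categories where they disagree, so the discussion can start there. The function name `disagreements` and the reviewer names are hypothetical.

```python
from __future__ import annotations


def disagreements(
    scores_by_reviewer: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Return the categories where reviewers of one ticket did not all agree.

    Assumes every reviewer scored the same set of categories.
    """
    categories = next(iter(scores_by_reviewer.values())).keys()
    result = {}
    for category in categories:
        ratings = {
            reviewer: scores[category]
            for reviewer, scores in scores_by_reviewer.items()
        }
        # More than one distinct rating means the reviewers disagree.
        if len(set(ratings.values())) > 1:
            result[category] = ratings
    return result


# Hypothetical scores from two reviewers for the same ticket.
scores = {
    "reviewer_a": {
        "Problem Comprehension": "Yes",
        "Solution Accuracy & Completeness": "Yes",
        "Tone & Empathy": "No",
        "Process Adherence": "Yes",
    },
    "reviewer_b": {
        "Problem Comprehension": "Yes",
        "Solution Accuracy & Completeness": "Yes",
        "Tone & Empathy": "Yes",
        "Process Adherence": "Yes",
    },
}
to_discuss = disagreements(scores)
print(to_discuss)
```

In this made-up example only Tone & Empathy would come up for discussion, which suggests that category's definition may need tightening.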

3. The Selection: Which Tickets to Review?

You can’t review every ticket, so you need a fair method for selecting a representative sample. The goal is to get a holistic view of an agent’s performance, not just to cherry-pick their worst interactions.

The simplest and fairest method is random selection. Commit to reviewing a set number of tickets per agent, per week or month. For example, you might decide to review 3-5 tickets per agent each week. Use a random number generator to pick them. A fixed pattern, such as pulling the 3rd, 7th, and 11th tickets an agent handled on a given day, is a workable shortcut, though it is systematic rather than truly random. Either approach reduces selection bias and gives a more accurate picture of their typical performance than hand-picking tickets.
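If your help desk can export a list of tickets with the handling agent, random selection takes only a few lines. This is a minimal Python sketch under that assumption; `sample_tickets`, the `(ticket_id, agent)` tuple format, and the sample data are hypothetical.

```python
from __future__ import annotations

import random
from collections import defaultdict


def sample_tickets(
    tickets: list[tuple[str, str]],
    per_agent: int = 3,
    seed: int | None = None,
) -> dict[str, list[str]]:
    """Pick up to `per_agent` random ticket IDs for each agent.

    `tickets` is a list of (ticket_id, agent) pairs.
    """
    rng = random.Random(seed)  # pass a seed to make a draw repeatable
    by_agent = defaultdict(list)
    for ticket_id, agent in tickets:
        by_agent[agent].append(ticket_id)
    return {
        agent: rng.sample(ids, min(per_agent, len(ids)))
        for agent, ids in by_agent.items()
    }


# Hypothetical export: five tickets for one agent, two for another.
tickets = [
    ("T-1", "sam"), ("T-2", "sam"), ("T-3", "sam"),
    ("T-4", "sam"), ("T-5", "sam"),
    ("T-6", "alex"), ("T-7", "alex"),
]
picked = sample_tickets(tickets, per_agent=3)
for agent, ids in picked.items():
    print(agent, ids)
```

Agents with fewer tickets than the target simply have all of their tickets selected, so a quiet week does not cause an error.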

Avoid the temptation to only review tickets with low CSAT scores. While those are important to analyze, they don’t represent the full body of work and can create a negative feedback loop where agents only hear about their mistakes.

The 4-Step Simple QA Workflow in Action

Now that you have the components, let’s put them together in a simple, repeatable workflow.

Step 1: Select & Review

Based on your chosen selection method (e.g., 3 random tickets per agent), pull the conversations for review. Read or listen to the entire interaction from start to finish to understand the full context. As you review, evaluate the interaction against each category on your scorecard. Take objective notes, focusing on specific behaviors and quoting parts of the conversation. For example, instead of writing “agent was rude,” write “Agent’s response ‘As I already told you…’ could be perceived as dismissive. A better alternative would be ‘I can see how that part is confusing, let me clarify…’”

Step 2: Score & Document

Fill out the scorecard for the interaction. Add your constructive comments and specific examples directly into your documentation, whether it’s a simple spreadsheet or a dedicated QA tool. Remember to highlight what the agent did well! Positive reinforcement is just as important as constructive feedback. A great review is balanced, recognizing both strengths and opportunities for improvement.

Step 3: Share & Discuss (The Coaching Session)

This is the most critical step in the entire process. A score without a conversation is meaningless and can be demoralizing. Schedule a regular 1-on-1 meeting with each agent to discuss their QA results. Frame these meetings as collaborative coaching sessions, not performance reviews.

- Start with the positive. Begin the conversation by highlighting a ticket where they did an excellent job.

- Discuss opportunities collaboratively. Instead of telling them what they did wrong, ask questions. “I noticed on this ticket the customer seemed a bit confused by the first answer. Walk me through your thought process there. How could we make that clearer next time?”

- Focus on one or two key takeaways. Don’t overwhelm the agent with a laundry list of ten things to fix. Agree on one specific, actionable goal for them to focus on before your next meeting.

Step 4: Analyze & Iterate

The final step is to zoom out. Once a month or once a quarter, aggregate the data from all your reviews. Look for trends across the entire team. Are many agents struggling with the same category on the scorecard? This might not be an individual agent issue, but a team-wide training gap. Are a high number of tickets related to a single product bug? This is valuable data to share with your product team.

This big-picture analysis turns your QA process from a simple agent-level coaching tool into a strategic engine for improving the entire customer experience. It also allows you to iterate on your QA process itself. Is a scorecard category proving unhelpful? Remove it. Is a new type of issue emerging? Add a category for it.
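The team-wide trend check from Step 4 can be approximated with a small aggregation. The Python sketch below assumes each review is stored as a dictionary with an `agent` and a `ratings` mapping on the three-point scale; that record shape, the function name `needs_improvement_rates`, and the sample data are assumptions for illustration.

```python
from __future__ import annotations

from collections import Counter


def needs_improvement_rates(reviews: list[dict]) -> dict[str, float]:
    """Share of reviews rated 'Needs Improvement' for each category."""
    totals = Counter()
    flagged = Counter()
    for review in reviews:
        for category, rating in review["ratings"].items():
            totals[category] += 1
            if rating == "Needs Improvement":
                flagged[category] += 1
    return {category: flagged[category] / totals[category] for category in totals}


# Hypothetical month of reviews, trimmed to two categories for brevity.
reviews = [
    {"agent": "sam", "ratings": {
        "Solution Accuracy & Completeness": "Needs Improvement",
        "Tone & Empathy": "Meets Expectations"}},
    {"agent": "alex", "ratings": {
        "Solution Accuracy & Completeness": "Needs Improvement",
        "Tone & Empathy": "Exceeds Expectations"}},
    {"agent": "jo", "ratings": {
        "Solution Accuracy & Completeness": "Needs Improvement",
        "Tone & Empathy": "Meets Expectations"}},
    {"agent": "sam", "ratings": {
        "Solution Accuracy & Completeness": "Meets Expectations",
        "Tone & Empathy": "Meets Expectations"}},
]
rates = needs_improvement_rates(reviews)
for category, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{category}: {rate:.0%}")
```

A category that is flagged for several different agents, as Solution Accuracy & Completeness is in this made-up data, points toward a training gap more than an individual issue.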

Avoiding Common Pitfalls

As you roll out your process, be mindful of these common traps:

- The “Gotcha” Culture: If agents feel QA is only there to find their mistakes, they will become defensive and disengaged. From day one, relentlessly communicate that the process is for coaching and development. Celebrate high scores and share excellent ticket examples with the whole team.

- Inconsistent Scoring: If an agent feels their score depends more on the reviewer’s mood than their actual performance, they will lose trust. This is why calibration is non-negotiable.

- Focusing Only on the Negative: A QA review should be a balanced assessment. Make it a rule to find and document something the agent did well on every single ticket you review.

Your Journey to Excellence Starts Now

Implementing a support QA review process doesn’t have to be an intimidating project. By starting with a simple scorecard, a clear workflow, and a relentless focus on coaching over criticism, you can build a powerful system for elevating your team’s performance. You will create a culture of excellence, provide your agents with a clear path for professional growth, and, most importantly, deliver a consistently fantastic experience that turns customers into loyal advocates.

Don’t wait for the perfect software or the perfect scorecard. Start small, be consistent, and listen to your team’s feedback. The journey from good to great support begins with that first simple review.
