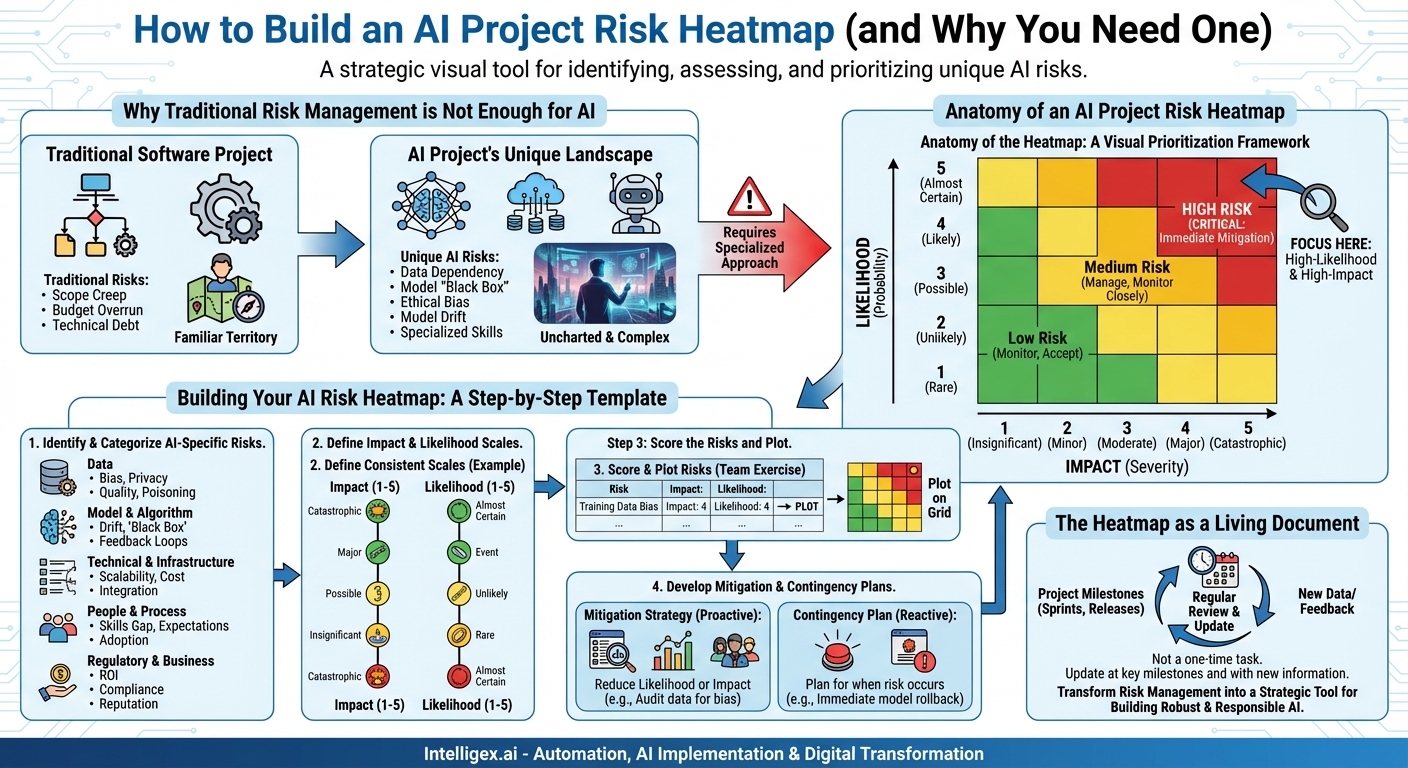

The race to implement Artificial Intelligence is on. Across industries, organizations are scrambling to leverage AI for everything from optimizing supply chains to personalizing customer experiences. This excitement is well-founded; the potential for innovation and competitive advantage is immense. However, beneath the surface of this transformative technology lies a landscape of unique and complex risks. AI projects are not simply larger software projects; they are a different breed, and recognizing that difference is the first step toward success. Traditional risk management frameworks, while valuable, often fail to capture the nuanced challenges of AI. This is where a specialized tool becomes not just helpful, but essential: the AI Project Risk Heatmap.

This simple yet powerful visual tool helps teams identify, assess, and prioritize the specific risks inherent in AI development. It transforms abstract fears into a concrete, actionable plan, enabling teams to navigate the turbulent waters of AI implementation with confidence. By mapping potential pitfalls, you can proactively build guardrails, allocate resources effectively, and ensure your project delivers on its promise without succumbing to common, and often costly, failures.

Why Traditional Risk Management Isn’t Enough for AI

If you’ve managed a complex software project, you’re familiar with risk registers and mitigation plans. You track budget overruns, scope creep, and technical debt. While these are all still relevant, AI introduces entirely new categories of risk that can have far-reaching consequences. Sticking solely to a traditional approach is like trying to navigate a new city using an old map—you’ll miss the new roads, the unexpected roadblocks, and the one-way streets.

The unique challenges of AI stem from its core components: data, models, and the probabilistic nature of its outputs.

- Data-Dependent Risks: Unlike traditional software where logic is explicitly coded, AI models learn their logic from data. This makes them profoundly susceptible to the quality of that data. Biased data leads to biased and unfair models. Poor quality data results in poor performance. Insufficient data means the model can’t generalize. Furthermore, the use of vast datasets, often containing personal information, substantially increases privacy and security risks.

- Model and Algorithmic Risks: The very nature of some advanced models, particularly deep learning networks, can be a risk. The “black box” problem, where even the creators cannot fully explain a model’s specific decision-making process, poses significant challenges for accountability and debugging. Another critical risk is “model drift,” where a model’s performance degrades over time as the real-world data it encounters diverges from the data it was trained on.

- Ethical and Reputational Risks: An AI system that makes unfair loan decisions, displays racial or gender bias, or fails in a safety-critical setting can cause irreparable harm to a company’s reputation and bottom line. These ethical considerations are not edge cases; they are central risks that must be managed from day one.

- Human and Organizational Risks: AI projects demand a unique blend of skills that are in high demand. A critical skills gap can derail a project. Equally dangerous are misaligned expectations from stakeholders who may view AI as a magic bullet, leading to poorly defined goals and inevitable disappointment. User adoption can also be a major hurdle if end-users don’t trust or understand the AI-powered tools they are given.

Anatomy of an AI Project Risk Heatmap

A risk heatmap is a visual tool used to represent risks in a way that is instantly understandable. It’s a grid that plots risks along two axes: Likelihood (the probability of the risk occurring) and Impact (the severity of the consequences if it does). The combination of these two factors determines the overall risk level, which is typically represented by a color.

The goal is simple: to draw everyone’s attention to the top-right corner of the map. These are the high-likelihood, high-impact risks that require immediate and robust mitigation strategies.

The color-coding system provides an at-a-glance prioritization framework:

- Red (High Risk): These are your most critical threats (e.g., high likelihood, major impact). They demand immediate attention, dedicated resources, and a comprehensive mitigation plan. These are the risks that can single-handedly derail your project or cause significant business harm.

- Yellow/Amber (Medium Risk): These risks are significant and must be managed, but they may not require the same level of urgency as the red-zone risks. Mitigation plans should be developed and the risks monitored closely.

- Green (Low Risk): These are risks with low likelihood and/or low impact. While they shouldn’t be ignored entirely, they can often be accepted or managed with minimal effort, allowing the team to focus on more pressing issues.
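To make the color bands concrete, one common approach is to derive them from a single score. The sketch below assumes 1-to-5 scales for both axes and multiplies them together; the function name `risk_zone` and the thresholds (15 and 6) are illustrative assumptions rather than a standard, so tune them to your own risk appetite.

```python
def risk_zone(likelihood: int, impact: int) -> str:
    """Map a (likelihood, impact) pair on 1-5 scales to a color zone."""
    for name, value in (("likelihood", likelihood), ("impact", impact)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")

    score = likelihood * impact  # ranges from 1 to 25

    # Thresholds are illustrative assumptions, not an industry standard.
    if score >= 15:
        return "red"
    if score >= 6:
        return "amber"
    return "green"


print(risk_zone(likelihood=4, impact=4))
print(risk_zone(likelihood=2, impact=2))
```

A pure multiplication rule places a rare but catastrophic risk in the green band, so some teams add an override that escalates anything with the maximum impact score.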

The power of the heatmap lies in its ability to facilitate communication. A well-constructed heatmap can convey the entire risk landscape of a complex AI project to a non-technical executive in a matter of seconds. It creates a shared understanding and a common language for discussing and prioritizing risk across different teams, from data science and engineering to legal and business leadership.

Building Your AI Risk Heatmap: A Step-by-Step Template

Creating a heatmap is a collaborative exercise, not a solo task. It requires input from a diverse group of stakeholders to be truly effective. Here’s a practical, step-by-step guide to building and using your own.

Step 1: Identify and Categorize AI-Specific Risks

The first step is brainstorming. Gather your team—data scientists, ML engineers, product managers, domain experts, and even representatives from legal or compliance—and identify as many potential risks as possible. To structure this process, group them into logical categories. Here are some essential categories for any AI project:

- Data Risks:
  - Systemic bias in training data leading to discriminatory outcomes.
  - Violation of data privacy regulations (e.g., GDPR, CCPA).
  - Poor data labeling quality resulting in an inaccurate model.
  - Insufficient data volume to train a robust model.
  - Data poisoning or adversarial attacks on the training set.

- Model & Algorithm Risks:
  - Model performance degrades over time (model drift).
  - Lack of model interpretability (“black box”) prevents auditing and trust.
  - Unintended negative feedback loops (e.g., a recommendation engine that narrows user choice over time).
  - Model fails catastrophically on edge cases not seen in training.
  - Security vulnerabilities allowing for model extraction or adversarial attacks on the deployed model.

- Technical & Infrastructure Risks:
  - Inability to scale the model to handle production-level traffic.
  - High and unpredictable cloud computing costs for training or inference.
  - Complex integration with existing legacy systems.
  - Dependency on a single vendor for a critical part of the MLOps stack.

- People & Process Risks:
  - Lack of required skills (e.g., MLOps, data engineering) within the team.
  - Unrealistic expectations from business stakeholders.
  - Poor user adoption due to lack of trust or usability.
  - Ethical oversight is treated as an afterthought rather than a core requirement.

- Regulatory & Business Risks:
  - The final solution does not deliver the expected ROI.
  - The project violates emerging AI-specific regulations.
  - Reputational damage from a high-profile model failure.
  - The problem the AI is solving is not a high-value business problem.
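If you prefer to keep the brainstorm output in code rather than a spreadsheet, a small register like the one below may be enough to start with. It is a minimal sketch: the `Risk` class, the `Category` enum, and the ID scheme ("D-01", "M-01") are hypothetical names chosen for illustration, and only a few of the risks listed above are included.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    DATA = "Data"
    MODEL = "Model & Algorithm"
    TECHNICAL = "Technical & Infrastructure"
    PEOPLE = "People & Process"
    BUSINESS = "Regulatory & Business"


@dataclass(frozen=True)
class Risk:
    risk_id: str        # hypothetical ID scheme, e.g. "D-01"
    category: Category
    description: str


register = [
    Risk("D-01", Category.DATA, "Systemic bias in training data"),
    Risk("M-01", Category.MODEL, "Model performance degrades over time (model drift)"),
    Risk("T-01", Category.TECHNICAL, "Inability to scale to production-level traffic"),
    Risk("D-02", Category.DATA, "Insufficient data volume to train a robust model"),
]


def group_by_category(risks):
    """Return {category: [risk, ...]} so each group of experts can review its own list."""
    grouped = defaultdict(list)
    for risk in risks:
        grouped[risk.category].append(risk)
    return dict(grouped)


by_category = group_by_category(register)
for category, risks in by_category.items():
    print(category.value, [r.risk_id for r in risks])
```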

Step 2: Define Your Impact and Likelihood Scales

To score your risks, you need a consistent scale. A simple 1-to-5 scale for both impact and likelihood works well. The key is to define what each number means in the context of your project.

Impact Scale (Example):

- 1 (Insignificant): Minor internal inconvenience, no impact on users or budget.

- 2 (Minor): Small performance degradation, slight user frustration, negligible cost impact.

- 3 (Moderate): Noticeable feature failure, negative user experience, moderate financial impact or project delay.

- 4 (Major): Significant system outage, major regulatory scrutiny, brand damage, substantial financial loss.

- 5 (Catastrophic): Widespread system failure, severe safety implications, major data breach, legal action, and irreparable reputational harm.

Likelihood Scale (Example):

- 1 (Rare): Extremely unlikely to happen in the project’s lifecycle.

- 2 (Unlikely): Not expected to happen, but possible.

- 3 (Possible): Could happen; has a reasonable chance of occurring (e.g., ~50/50).

- 4 (Likely): Is more likely to happen than not.

- 5 (Almost Certain): Is expected to happen if no action is taken.
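The example scales above translate naturally into enumerations, which keeps workshop scores from drifting outside the agreed range. This is a sketch only: the member names mirror the labels above, while the helper `parse_score` is a hypothetical name introduced here.

```python
from enum import IntEnum


class Impact(IntEnum):
    INSIGNIFICANT = 1
    MINOR = 2
    MODERATE = 3
    MAJOR = 4
    CATASTROPHIC = 5


class Likelihood(IntEnum):
    RARE = 1
    UNLIKELY = 2
    POSSIBLE = 3
    LIKELY = 4
    ALMOST_CERTAIN = 5


def parse_score(scale, value):
    """Convert a raw score (int or numeric string) into a scale member.

    Raises ValueError for anything outside the 1-5 range.
    """
    try:
        return scale(int(value))
    except ValueError as exc:
        raise ValueError(f"{value!r} is not a valid {scale.__name__} score (1-5)") from exc


print(parse_score(Impact, 4).name)
print(parse_score(Likelihood, "3").name)
```

Because `IntEnum` members behave like integers, they can be multiplied or compared directly when you compute an overall score later.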

Step 3: Score the Risks and Plot the Heatmap

Go through your list of identified risks one by one. As a team, discuss and assign a score for both Impact and Likelihood. This discussion is often as valuable as the final output itself, as it forces alignment and uncovers different perspectives. For example, an engineer might rate a technical risk’s likelihood higher than a product manager would, while the product manager might have a better sense of its potential impact on users.

Once each risk has an (Impact, Likelihood) pair, plot it on your heatmap grid. A risk scored as (Impact=4, Likelihood=4) would land squarely in the red zone, while an (Impact=2, Likelihood=2) risk would be in the green.
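Plotting does not require a charting library. The sketch below places scored risks on a plain-text 5x5 grid, with Impact on the vertical axis and Likelihood on the horizontal axis so that the top-right corner holds the high-likelihood, high-impact risks. The function names and the risk IDs are hypothetical, and the three sample scores reuse the pairs mentioned in this article.

```python
def build_grid(scored_risks, size=5):
    """Return {(impact, likelihood): [risk_id, ...]} for a size x size grid."""
    grid = {(i, l): [] for i in range(1, size + 1) for l in range(1, size + 1)}
    for risk_id, impact, likelihood in scored_risks:
        if (impact, likelihood) not in grid:
            raise ValueError(f"{risk_id}: scores must be between 1 and {size}")
        grid[(impact, likelihood)].append(risk_id)
    return grid


def render(grid, size=5):
    """Render the grid as text, highest impact on the top row."""
    rows = []
    for impact in range(size, 0, -1):
        cells = [",".join(grid[(impact, l)]) or "." for l in range(1, size + 1)]
        rows.append(f"I{impact} | " + " | ".join(cell.center(9) for cell in cells))
    axis = "   ".join(f"L{l}".center(9) for l in range(1, size + 1))
    rows.append("     " + axis)
    return "\n".join(rows)


# (risk_id, impact, likelihood); the IDs are made up for illustration.
scored = [("D-01", 5, 3), ("M-01", 4, 4), ("T-01", 2, 2)]
print(render(build_grid(scored)))
```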

Step 4: Develop Mitigation and Contingency Plans

The heatmap is not a trophy to be admired; it’s a call to action. For every risk, especially those in the red and yellow zones, you must define a mitigation plan. This involves answering the question: “What are we going to do to reduce the likelihood or impact of this risk?”

Consider the risk of “Systemic bias in training data” (e.g., Impact=5, Likelihood=3).

- Mitigation Strategy: Proactively implement a multi-faceted approach. This could include conducting a thorough exploratory data analysis (EDA) with fairness metrics, using data augmentation techniques to balance classes, engaging diverse human annotators for data labeling, and utilizing fairness toolkits (like Google’s What-If Tool or IBM’s AIF360) to audit the model before deployment. Assign an owner responsible for overseeing these actions.
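As a rough illustration of what a fairness metric looks like in practice, the sketch below computes the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. It is written in plain Python with made-up toy data, and the function names are assumptions introduced here; the toolkits mentioned above offer far more thorough audits.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest selection rate (0.0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy data, invented for illustration: 1 = approved, 0 = rejected.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(selection_rates(preds, groups))
print(demographic_parity_difference(preds, groups))
```

What counts as an acceptable gap depends on the use case and applicable regulation, so the threshold is a decision for the team and its legal or compliance representatives.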

For some risks, you might also create a contingency plan: “If this risk occurs, what is our immediate response plan?” This is crucial for high-impact risks where mitigation might not be foolproof.
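A mitigation plan can be recorded alongside the risk so that ownership and contingency gaps are easy to spot. The sketch below is one possible shape, not a prescribed format: the `MitigationPlan` class, the owner name, and the rule that an impact of 4 or more calls for a contingency plan are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MitigationPlan:
    risk: str
    owner: str                      # person accountable for the actions
    impact: int                     # 1-5
    likelihood: int                 # 1-5
    actions: List[str] = field(default_factory=list)
    contingency: str = ""           # immediate response if the risk occurs anyway

    def needs_contingency(self) -> bool:
        """True for high-impact risks (assumed cut-off: impact >= 4) with no response plan."""
        return self.impact >= 4 and not self.contingency.strip()


plan = MitigationPlan(
    risk="Systemic bias in training data",
    owner="ML lead",  # hypothetical owner
    impact=5,
    likelihood=3,
    actions=[
        "Run exploratory data analysis with fairness metrics",
        "Audit the model with a fairness toolkit before deployment",
    ],
)
print(plan.needs_contingency())
```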

The Heatmap as a Living Document

Perhaps the most critical rule of using an AI risk heatmap is that it is never “done.” An AI project is a journey of discovery. The risk landscape will change continuously as you acquire new data, experiment with different models, and get user feedback.

Your heatmap should be a living document, integrated into the rhythm of your project. Review and update it at key milestones: at the end of each sprint, before a major release, or when a significant assumption changes. This regular review keeps risk management at the forefront of the team’s consciousness and ensures you’re always focused on the most significant threats to your project’s success.
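To make each review more than a glance at the grid, it can help to compare the current scores with those from the previous review. The sketch below assumes each snapshot is a dictionary of `{risk_id: (impact, likelihood)}`; the function name, the snapshot format, and the sample data are hypothetical.

```python
def diff_snapshots(previous, current):
    """Compare two {risk_id: (impact, likelihood)} snapshots from successive reviews."""
    changes = {"added": [], "removed": [], "rescored": []}
    for risk_id in sorted(set(previous) | set(current)):
        if risk_id not in previous:
            changes["added"].append(risk_id)
        elif risk_id not in current:
            changes["removed"].append(risk_id)
        elif previous[risk_id] != current[risk_id]:
            changes["rescored"].append((risk_id, previous[risk_id], current[risk_id]))
    return changes


# Sample data, invented for illustration.
last_sprint = {"D-01": (5, 3), "T-01": (2, 2)}
this_sprint = {"D-01": (5, 2), "M-01": (4, 4)}
print(diff_snapshots(last_sprint, this_sprint))
```

Reviewing the "rescored" entries first is a quick way to check whether mitigation work is actually moving risks out of the red zone.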

By adopting this dynamic approach, you transform risk management from a static, check-the-box exercise into a powerful strategic tool. It fosters a culture of proactive awareness, encouraging teams to ask “what could go wrong?” not out of fear, but as a constructive step toward building more robust, reliable, and responsible AI. While the path of AI development is inherently uncertain, an AI Project Risk Heatmap provides the compass you need to navigate it successfully, turning potential pitfalls into well-managed challenges and delivering on the true, transformative potential of your work.
